X’s Algorithm Pushes Journalism to the Margins

A study in Nature shows how the “For You” feed favors conservative content, reduces the visibility of traditional media, and systematically shifts political attitudes to the right.

In recent years, I have become increasingly fascinated by the influence of algorithms on journalism. How do they determine what we see? Which voices do they amplify? Which opinions fade into the background? Driven by that curiosity, I read a recent study published in the leading scientific journal Nature, which casts a troubling light on the role of the algorithm behind X (formerly Twitter).

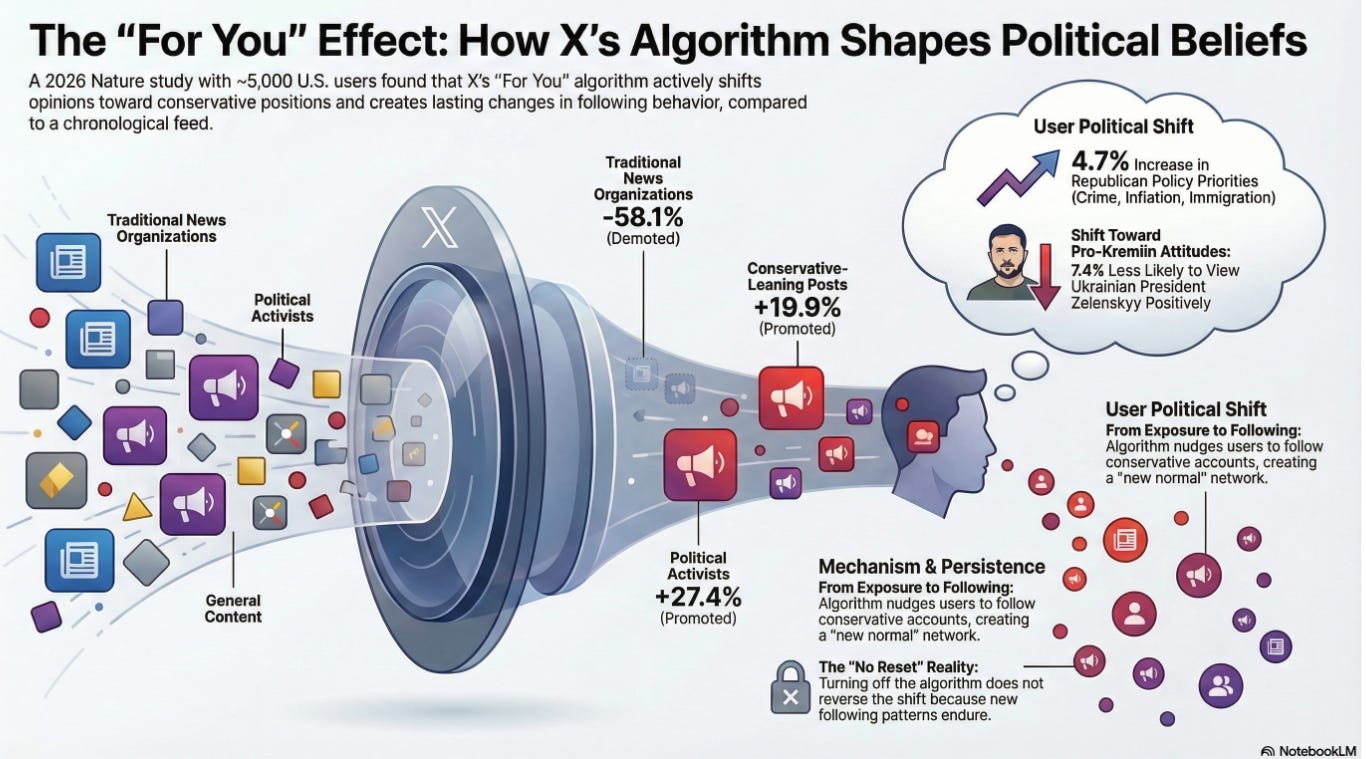

According to researchers from institutions including Bocconi University, the University of St. Gallen and the Paris School of Economics, the so-called “For You” feed does not function as a neutral conduit of information. Instead, it acts as a politically slanted force that steers attention and pushes traditional journalism to the margins. Compared with a chronological timeline, the visibility of traditional media accounts in the “For You” feed drops by as much as 58.1 percent. At the same time, political activists gain 27.4 percent more reach, and entertainment content is promoted 21.5 percent more often.

The study shows that users exposed to the algorithmic feed are shown conservative content more often. Posts with a conservative slant are about 20 percent more likely to be shown by the algorithm, while liberal posts see only a 3.1 percent increase in exposure.

This exposure does not come without consequences: users’ political attitudes measurably shift to the right. The study tracked nearly 5,000 American users over a seven-week period and demonstrates that algorithmic curation has direct effects on public opinion. Users primarily exposed to the “For You” feed paid greater attention to Republican priorities such as immigration, inflation, and crime.

They also became more opposed to the criminal investigations into Donald Trump and adopted more pro-Russian attitudes toward the war in Ukraine. Approval of Ukrainian President Volodymyr Zelensky declined by 7.4 percentage points among this group.

Notably, the algorithmic feed appears designed to maximize engagement: posts in it receive on average 480 percent more likes and 408 percent more reposts than posts in a chronological feed. What drives interaction, however, does not necessarily produce balanced information.

One of the most concerning findings is that the influence of the algorithm is not easily reversed. When users switch from the algorithmic feed back to a chronological one, the effect does not simply disappear. This is because the algorithm actively encourages users to follow new accounts, particularly conservative political activists. Once followed, these accounts continue to appear in the chronological feed, allowing the algorithm’s influence to persist over time.

The researchers conclude that algorithms on platforms such as X can no longer be regarded as neutral tools. In practice, they function as an editorial power: shaping what people know, who they perceive as adversaries, and which societal issues receive priority.

Now that platforms like X have become critical information infrastructures, the scholars warn that governments must urgently pursue greater algorithmic transparency to safeguard democratic debate and protect the position of independent journalism.

I am more concerned about the 'Following' feed, because there I no longer see many of my friends' posts, and hardly anybody sees what I post. Something very worrying must be going on with shadowbans.